At Smooth Fusion, we make our clients happy by building them functional, usable, secure, and elegant software. Behind the scenes, helping to make it all possible, is a modern, secure, and efficient deployment pipeline and hosting setup that we have refined over many years.

The complexity of managing code, deploying code, and hosting websites is surprising to many people. The process involves many different tools and technologies.

Version Control and Code Repository

The programming code, sometimes called source code, that our developers write and maintain is stored in a code repository provided by Atlassian’s Bitbucket. Developers “pull” code out of Bitbucket and “push” code back into Bitbucket. Think of Bitbucket like an organized storage closet for source code.

Developers work on a branch of the code on their computer. Branching creates a separate copy of a project’s code on the developer’s local computer for the developer to work on. It allows the developer to make changes and experiment without worrying about breaking the master copy, called the master branch. When the developer is ready to submit their changes, they merge their code into the master branch.

Projects also have a version of the project’s live database that we call the development database, commonly called the dev database. Our developers connect to these SQL databases which are hosted in Microsoft Azure. Connecting to a dev database hosted in Azure saves time that would otherwise be spent creating copies of dev databases onto the developer’s computer. The dev database will have realistic data for the developer to work with, but it is not an exact copy of the live database. Any personally identifiable information is scrubbed from dev databases for privacy reasons.
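To make the scrubbing idea concrete, here is a minimal sketch of how personally identifiable fields might be replaced with placeholders when a dev database is prepared. It is an illustration only, not our actual tooling: the column names, the `scrub_row` helper, and the sample row are all hypothetical.

```python
# Hypothetical list of sensitive columns; a real schema would define its own.
PII_COLUMNS = {"email", "full_name", "phone"}


def scrub_row(row: dict, row_number: int) -> dict:
    """Return a copy of `row` with PII columns replaced by placeholders."""
    scrubbed = {}
    for column, value in row.items():
        if column in PII_COLUMNS and value is not None:
            # The placeholder keeps rows distinguishable without keeping real data.
            scrubbed[column] = f"{column}_{row_number}"
        else:
            scrubbed[column] = value
    return scrubbed


# Made-up sample data standing in for a row from a live database.
live_row = {"id": 42, "email": "jane@example.com", "full_name": "Jane Doe", "plan": "pro"}
dev_row = scrub_row(live_row, row_number=1)
print(dev_row)
```

Non-sensitive columns such as `id` and `plan` pass through unchanged, so the developer still gets realistic data to work with.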

Committing Source Code and Performing a Build

Saving a set of changes to the repository is called a commit because the code is being committed to the project. As soon as a commit lands on the master branch, for example through a merge, a build is triggered. A build is a process where the code and often other elements are converted into software that can be run as a program. After a build, the code is said to be compiled code.

We use a hosted service called AppVeyor to build projects. AppVeyor runs custom scripts to build the solution and package up compiled code and other related files into ZIP files, called NuGet packages, with version numbers. AppVeyor is an example of a Continuous Integration/Continuous Deployment (CI/CD) solution.
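As a rough illustration of the "versioned ZIP file" idea, the sketch below bundles a few files into a ZIP archive and gives it a name that carries the package ID and version. It is written in Python for readability and is not our AppVeyor build script. The `package_name` and `build_package` helpers and the file names are hypothetical, and a real NuGet package also contains a `.nuspec` manifest that this sketch leaves out.

```python
import io
import zipfile


def package_name(package_id: str, version: str) -> str:
    """Build a file name in the usual NuGet style: <id>.<version>.nupkg."""
    return f"{package_id}.{version}.nupkg"


def build_package(files: dict[str, bytes]) -> bytes:
    """Zip the given files (path -> content) and return the archive bytes."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        for path, content in files.items():
            archive.writestr(path, content)
    return buffer.getvalue()


# Hypothetical build output for a site called "MySite.Web".
name = package_name("MySite.Web", "1.4.27")
data = build_package({
    "bin/MySite.dll": b"compiled code would go here",
    "web.config": b"<configuration/>",
})
print(name, len(data), "bytes")
```

Because the version number is part of the package name, every later stage can refer to exactly the same build.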

AppVeyor spins up a completely fresh cloud-based environment for each build. Because nothing is left over from earlier builds, this helps ensure that each build will work when deployed to the next stage.

The final step for AppVeyor is to push a versioned NuGet package into a release management and deployment automation tool called Octopus Deploy.

Deployment

The term deployment refers to the steps and processes required to make a website or other software available for use by moving the software from one server to another. Octopus Deploy is an easy-to-use, flexible deployment pipeline that manages releases of new versions, automates complex deployment tasks, and promotes code to hosting environments in Azure or any other environment that can be integrated into Octopus Deploy. We manage the hosting of Octopus Deploy on a Microsoft Azure VM. We have been using Octopus Deploy for about six years and it has been very valuable.

As soon as Octopus Deploy receives a new package from AppVeyor, it auto-deploys the web app code in the package to an Azure Web App development site. On the development site, developers, project managers, quality assurance specialists, and even clients can view changes. When changes are approved on the development site, it is time to move the site into a Production Environment.

Production Environments

A Production Environment is a server environment where a site or other software is in operation for its intended use and by its intended users. "Production Environments" are sometimes referred to as "Live Environments". In Smooth Fusion’s case, we typically have three production environments: staging, warmup, and production (or live).

About our Website Hosting

All our website hosting is done in Microsoft Azure. We use Azure Web Apps and Azure SQL databases hosted in the US East Coast region for all Development sites. Because Development sites are not receiving traffic from the general public, fewer cloud resources are devoted to those sites. Our Production Environments are in a separate Azure subscription that we call our Hosting Subscription, and are made up of Azure Web Apps, Azure SQL databases in Elastic Pools, Azure Search services, Service Buses, and Azure Storage. These services are in the US South Central region on high CPU/Memory App Service Plans.

In Production environments, no deployments are automatic because approval is needed to deploy releases to each stage. In Octopus Deploy, we click a button to deploy the NuGet package that was deployed to the Development environment to the Staging site, then to Warmup, and ultimately to Production (or live).
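The promotion flow above can be pictured as a short, ordered list of stages where each step forward requires an approval. The sketch below models that rule in Python. It is not Octopus Deploy's API; the `Release` class, its methods, and the approver names are hypothetical and exist only to show the gating logic.

```python
STAGES = ["Development", "Staging", "Warmup", "Production"]


class Release:
    """Tracks how far one versioned package has been promoted."""

    def __init__(self, version: str):
        self.version = version
        # Development is the only stage that receives packages automatically.
        self.deployed_to = ["Development"]

    def next_stage(self):
        """Return the next stage in order, or None when already in Production."""
        index = len(self.deployed_to)
        return STAGES[index] if index < len(STAGES) else None

    def promote(self, approved_by: str) -> str:
        """Deploy the same package to the next stage, but only with an approval."""
        target = self.next_stage()
        if target is None:
            raise ValueError("release is already in Production")
        if not approved_by:
            raise PermissionError(f"deploying to {target} requires approval")
        self.deployed_to.append(target)
        return target


release = Release("1.4.27")
release.promote(approved_by="client")           # Development -> Staging
release.promote(approved_by="project manager")  # Staging -> Warmup
print(release.deployed_to)
```

The important property is that the same versioned package moves through every stage, so what was approved in Staging is what reaches Production.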

Staging

A Staging site allows our clients and other stakeholders to provide final approval of changes before those changes are pushed to the public-facing live site. Staging is basically an identical setup to the live site that gives all parties a chance to test and approve changes before they are deployed to the live site. Think of staging as dress rehearsal before opening night.

Warmup

When zero downtime during deployments is a client requirement, we take advantage of a feature called Deployment slots in Azure Web Apps. Deployment slots allow us to deploy the web app to a “slot”, which is a separate website with a separate URL. We call this Warmup. You may also see it referred to as Pre-Production. Once the app is deployed to this warmup deployment slot, a custom PowerShell script uses the sitemap as a guide to request every URL (every page) of the site. This “warms up” the site by caching the pages in server memory, so the site is ready to start serving pages quickly when the public begins hitting it.
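Our warmup script is written in PowerShell, but the idea can be sketched in a few lines of Python: read the page addresses from the sitemap, point them at the warmup slot, and request each one. Everything named here is an assumption for illustration, including the helper functions, the sample sitemap, and the `example-warmup.azurewebsites.net` hostname.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit
from urllib.request import urlopen

# Namespace used by standard sitemap files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Made-up sitemap standing in for the one a real site would publish.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/about</loc></url>
</urlset>"""


def urls_from_sitemap(sitemap_xml: str) -> list[str]:
    """Extract every <loc> address from a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def retarget(url: str, slot_host: str) -> str:
    """Swap the public hostname for the warmup slot's hostname."""
    return urlsplit(url)._replace(netloc=slot_host).geturl()


def fetch_status(url: str) -> int:
    """Request a page and return its HTTP status code."""
    with urlopen(url, timeout=30) as response:
        return response.status


def warm_up(urls, fetch=fetch_status) -> dict:
    """Request each URL once; record the status, or None if the request failed."""
    results = {}
    for url in urls:
        try:
            results[url] = fetch(url)
        except OSError:  # urllib's URLError and HTTPError are OSError subclasses
            results[url] = None
    return results


slot_urls = [retarget(u, "example-warmup.azurewebsites.net")
             for u in urls_from_sitemap(SAMPLE_SITEMAP)]
```

Calling `warm_up(slot_urls)` would then request each page on the slot. The `fetch` parameter exists so the loop can be exercised without touching the network.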

Once we’re ready to take it live, we use the “Swap” feature of Azure Web Apps to move the domain names (the public web address) and any required certificates to the warmup slot, seamlessly directing all traffic with near-zero downtime. Then the warmup slot becomes the new Live site. All of this is automated using custom PowerShell scripts in Octopus Deploy.

Ongoing Website and Application Hosting

While we have put a lot of thought into how we created our deployment process, ongoing secure and reliable hosting is the most important part in the minds of our customers. We use Microsoft Azure and a variety of other tools to provide our customers with world-class hosting.

Azure Web Apps

We believe the Azure Web App infrastructure is perfect for hosting web sites. Azure Web Apps are extremely resilient, scale up in seconds, are very portable, offer 99.95% uptime, and are secure. For more details, read our blog on why we use Azure Web Apps.

Azure SQL Databases

Each website gets its own Azure SQL database. All Production databases are placed in an Elastic Pool. An Elastic Pool is simply a way for all databases to share a large pool of resources, while saving costs for our customers. Azure uses units called DTUs (Database Transaction Units) to measure the “horsepower” of a database. A DTU represents a mixture of CPU, Memory, Data I/O, and Log I/O, but basically it is a measurement of performance and capacity of the database as it accepts and serves up data to a website or application. It would be extremely cost prohibitive to dedicate a high number of DTUs to each database. But with an elastic pool, all databases in the pool share that horsepower for when usage spikes, while consuming a normal amount of DTUs most of the time. Azure SQL Databases allow us to scale space and DTUs quickly, easily, and on-demand. In our experience, the Azure SQL database uptime has been phenomenal, with almost no downtime.
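A quick back-of-the-envelope calculation shows why pooling helps. The numbers below are invented for illustration and are not our actual pool sizes: ten databases that each need 100 DTUs during a spike but only 10 DTUs the rest of the time, with the assumption that no more than two databases spike at once.

```python
def dedicated_dtus(db_count: int, peak: int) -> int:
    """DTUs needed if every database is sized for its own peak."""
    return db_count * peak


def pooled_dtus(db_count: int, peak: int, typical: int, concurrent_peaks: int) -> int:
    """DTUs needed if only `concurrent_peaks` databases spike at the same time."""
    return concurrent_peaks * peak + (db_count - concurrent_peaks) * typical


# Hypothetical workload: 10 databases, 100 DTUs at peak, 10 DTUs typically.
dedicated = dedicated_dtus(db_count=10, peak=100)
pooled = pooled_dtus(db_count=10, peak=100, typical=10, concurrent_peaks=2)

print(dedicated)  # 1000
print(pooled)     # 280
```

Under these assumptions the pool needs 280 DTUs instead of 1000, while every database can still reach its full peak when it needs to. The savings shrink if many databases tend to spike at the same moment.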

Cloudflare

Cloudflare is a worldwide network of services that increase the security, performance, and availability of websites. Cloudflare is used by tens of millions of sites and we highly recommend it for every site we host.

When a customer opts to use Cloudflare, website traffic runs through the Cloudflare network before reaching Azure. Below are a few reasons why Cloudflare brings so much value.

- Is the fastest DNS provider in the world, so users reach sites more quickly.

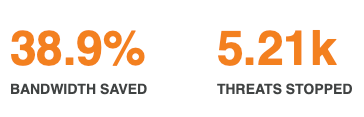

- Can stop a cyber-attack 20x larger than the largest attack ever recorded. Last month, Cloudflare blocked 5210 threats from even making it to our Azure web apps.

- Moves website content closer to users using its content delivery network (CDN), running in over 200 data centers around the world. In some cases, this can cut page load times in half while reducing bandwidth and requests to Azure by up to 60%. Last month Cloudflare saved Smooth Fusion almost 40% in bandwidth by serving content from the Cloudflare CDN.

- Has many more benefits, such as automatic image optimization, auto-minify, improved JavaScript paint time, HTTPS compression, a Web Application Firewall, rate limiting, and analytics.

Can you tell we are fans of Cloudflare?

Probely

We offer an option to scan websites weekly using Probely. Probely looks for vulnerabilities and security issues, covering the OWASP Top 10 and much more. It can also be used to check specific PCI DSS, ISO 27001, HIPAA, and GDPR requirements. Check out this ever-evolving list of vulnerabilities Probely detects.

PRTG Network Monitoring Software

For more information about Smooth Fusion’s use of PRTG, see this case study from Paessler.

Additional Monitoring

We are constantly watching monitors in PRTG, Azure, and Cloudflare, looking for ways to optimize performance, increase security, and prevent downtime. As we find problems or new solutions, we work daily with developers and Azure support to resolve the problems and implement the solutions.

On Call 24/7/365

Our automated monitoring triggers alerts to our team that are addressed 24 hours per day. We also have an after-hours emergency call system that allows our customers to bring issues to our attention. Beyond the network operations team, Smooth Fusion always has a software engineer on call in case an issue requires code changes.